Throughout history, a panic, a ‘technophobia’, has spread across industries every time a new technology arrives and promises to do what humans do - only faster, cheaper, and without the inconvenience of employees having what we humans call ‘free will’, which makes their output variable from a productivity point of view. We watched it happen with the introduction of the printing press in the 15th century, with the Industrial Revolution, with computers, and then with software like Photoshop. Now we are watching it happen all over again with artificial intelligence, except that this time the panic is louder, the stakes are higher, and, frankly, some of the fear is completely justified while the rest of it is just noise dressed up as principle.

Imagine, though, if the printing press had never become popular and had faded away amid public outrage. Or take the Industrial Revolution - can you imagine a world where fashion and art aren’t accessible to all? Doesn’t that sound almost barbaric?

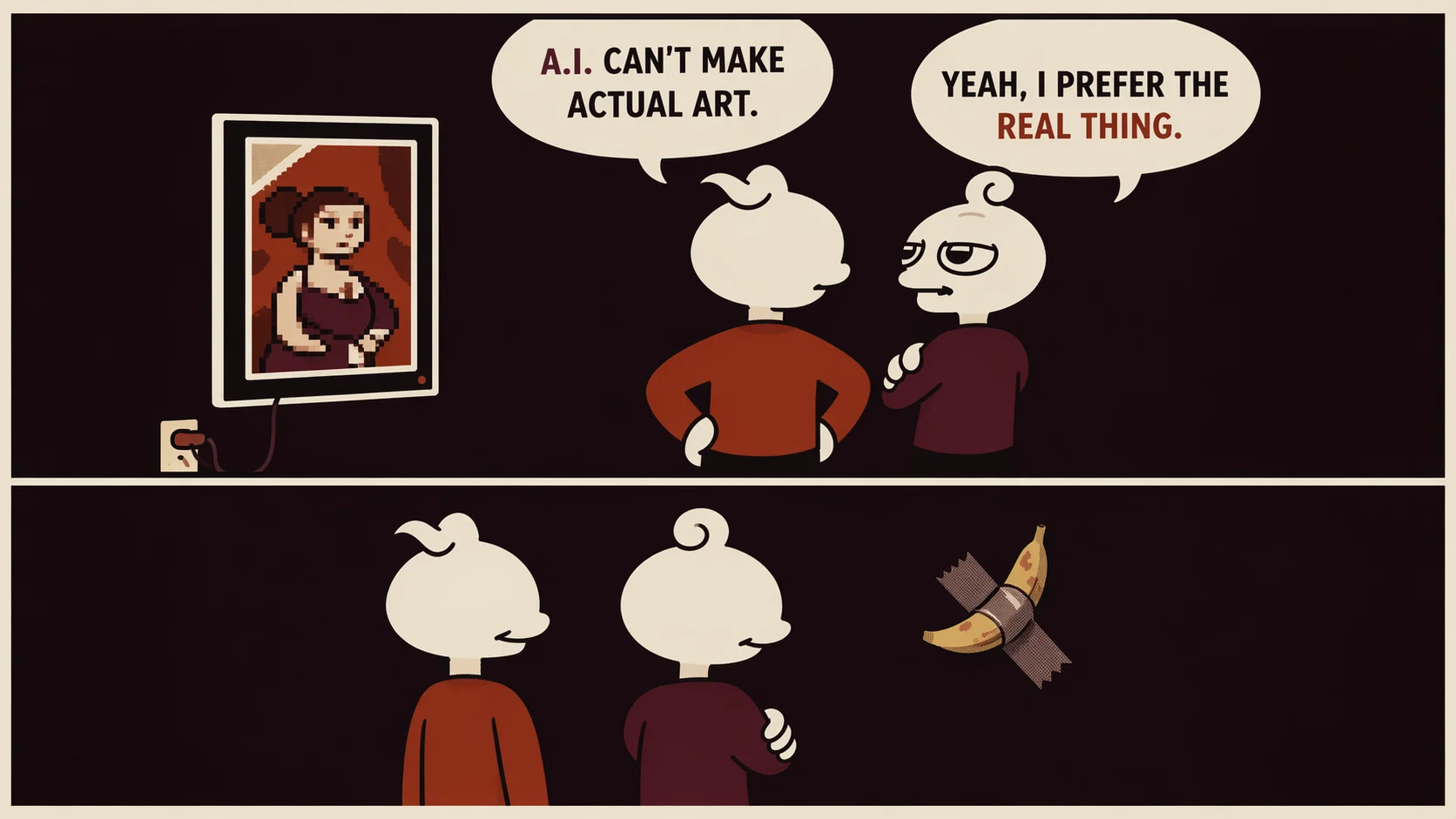

The noise tends to come from two directions. On one side, there are the absolutists who have decided that AI is an existential affront to human creativity, a plagiarism engine built on stolen data that produces soulless slop. On the other, there are the accelerationists who have discovered that you can now approximate the output of an entire creative team with a subscription and a good enough prompt, and who have quietly decided that approximation is sufficient. Both positions are intellectually dishonest, and both will prove, in different ways, to be commercially self-defeating.

What is actually happening in creative industries right now is something more complicated and more interesting than either camp is willing to acknowledge. It is, as Aditya Mani's original framework for this piece argues, a reorganisation: a fundamental structural shift in how ideas are generated, how images are produced, how influence accumulates, and how creative authority is distributed. The central question is no longer whether AI belongs in creative work, because it already does, whether we like it or not. The question, the only one worth asking seriously, is how we approach ethics, ownership, and creative responsibility within this new reality.

The Panic Is Valid. The Target Is Wrong.

When Valentino, Balenciaga, and Gucci released AI-generated campaign imagery in 2023 and 2024, the comment sections turned ferocious. People were not angry because the images were unattractive. Most of them were technically flawless, almost eerie in their perfection. They were angry because they understood intuitively what had been erased from the frame: a photographer, a casting director, a stylist, a model, a whole ecosystem of human labour and collaboration reduced to a prompt and a render. That anger is legitimate. It points at something real about the way AI has been deployed by corporations as a cost-elimination strategy, a way of gutting the middle of the industry while protecting the executive layer that made the decision. What gets hollowed out is the whole creative foundation of the fashion industry, the very thing that makes it aspirational to so many.

AI-Generated Campaign Visuals by Gucci

Santu Misra, an Indian creative director who returned to education mid-career, completed his MA just as his batch overlapped with a new intake in which, as he puts it, seventy percent of creative direction was already being done with AI. He describes the reaction with weary recognition. He has watched this before.

"The same thing happened when digital photography came, when Photoshop came. The idea that people are hating is not that it's made from AI. The idea people are hating is that it's taking away jobs from us - from writers, editors, stylists, creative directors. That is what is happening."

He goes further, with a candour that cuts through the usual hand-wringing: "More and more people are going to lose their gig. Brands want to optimise volume. They want one person to subscribe to AI, automate everything, and get an editor to clean it up. As if it works like that." It often does not. But more importantly, his observation points to a structural reality of this moment that creative industries need to sit with honestly rather than gloss over with optimism: the disruption to mid-level creative employment is not hypothetical. It is already underway, and no amount of hopeful rhetoric about human irreplaceability is going to alter that trajectory for those who have not developed a practice deep and specific enough to justify their continued existence in the production chain.

But the response that many creatives have reached for - refusal, the performance of principled non-engagement - is not a solution. It is a postponement. Sujata Assomull, founding editor of Harper's Bazaar India, fashion journalist and author, has watched enough cycles of creative disruption to know that the only productive response is adaptation, not resistance for its own sake.

"Artificial intelligence is perhaps the most significant disruption journalism has faced since the dot-com era, when there was widespread concern that traditional newspapers and magazines would become obsolete. That period was a valuable training ground - it forced us to adapt, to think digitally, and to become more agile in how we tell stories."

The parallel is instructive. The editors and writers who thrived after the internet did not do so by pretending the internet was not happening. They did so by understanding what it could and could not do, using it to reach audiences they could never have reached before, and doubling down on the kinds of long-form, deeply reported, contextually rich work that a news aggregator could not replicate. The same logic applies now, and the creatives who understand that are already three moves ahead of the ones still debating whether to engage.

What AI Actually Does, and Where It Runs Out of Road

There is wishful thinking on both sides of this debate, and it is worth clearing away the mythology before trying to think clearly about where the real stakes lie. Generative AI is genuinely extraordinary at certain things. It can compress time dramatically. It can assist with research, pattern-finding, iteration, copy editing, ideation at scale, and the kind of administrative and operational labour - scheduling, documentation, coordination, translation - that has historically consumed enormous amounts of creative bandwidth. Archana Jain, founder and CEO of PR Pundit Havas Red, describes the practical shift in her industry with precision:

"AI allows us to gather insights and trends, cluster conversations with cohort analysis, and suggest pertinent narratives. It is enhancing the speed at which we generate briefs, outlines, analysis and translations. This compresses the time taken, enabling speed to market."

That is a real and valuable capability. But Jain is also clear about what remains irreplaceable in the human-led communications she oversees: "Meaningful content with context. A narrative crafted with meaning, not just messages." The distinction she is drawing - between messages and the original thought behind them - is the most important distinction in this entire conversation, and it is one that the industry keeps collapsing in both directions. Brands that outsource their entire creative output to AI lose authenticity. Professionals who refuse to use AI for the work it genuinely handles well waste the time and cognitive energy they could be spending on creative output that actually requires a human mind.

What AI cannot do is invent originality. It cannot replicate the lived experience that makes a piece of writing specific rather than generic, that makes a photograph feel true rather than technically accomplished, that makes a collection feel like it came from archival references, rather than from everywhere. Pratishtha Dobhal, former Editor-in-Chief of Cosmopolitan India and a creative technology evangelist, who has thought hard about where these lines actually fall, is direct about the limits of what the technology can currently achieve: "It can replicate ideas and prompts but so far cannot replicate human consciousness which evolves with lived experiences." She also flags something that should alarm anyone paying attention to the pace of development: technological singularity, she notes, may be deemed hypothetical, but it remains the most dangerous stage of development that no one can afford to arrive at unprepared.

Sujata Assomull puts it in terms that any dedicated journalist or creative will immediately recognise: "When it comes to intelligent, nuanced storytelling, there is still no substitute for human insight - particularly that of a seasoned journalist who has spent years reporting, observing, and understanding context." AI, she argues, produces output with a recognisable, formulaic quality. It performs well enough for certain audiences in certain formats, but the work that actually endures, that changes how people think or feel or see, still requires the kind of editorial judgment that can only be built through years of sustained attention to the world.

The Aesthetic Convergence Problem

One of the most under-examined risks of the current AI moment is not job displacement or intellectual laziness, though both are real. It is aesthetic convergence: the tendency of generative systems, built to optimise for clarity, coherence, symmetry, and refinement, to produce work that over time begins to look and sound and feel like everything else. Perfection, when it is generated at scale and at speed, stops being interesting. It becomes ambient. And in a media environment already saturated with content, ambient perfection is just sophisticated noise.

Mani's original essay captures this precisely: as algorithmic recommendation and generative design scale, aesthetic distinction becomes harder to sustain. Predictability follows efficiency. Visual language stabilises. Voices homogenise. Narratives repeat. You can already see this happening in fashion specifically, where the AI-generated campaign imagery produced by major houses in the past two years has a remarkable sameness to it - technically impeccable, conceptually vacant, utterly interchangeable. The tools optimise for the visual grammar of what already exists, which means they are constitutionally incapable of producing the genuinely new.

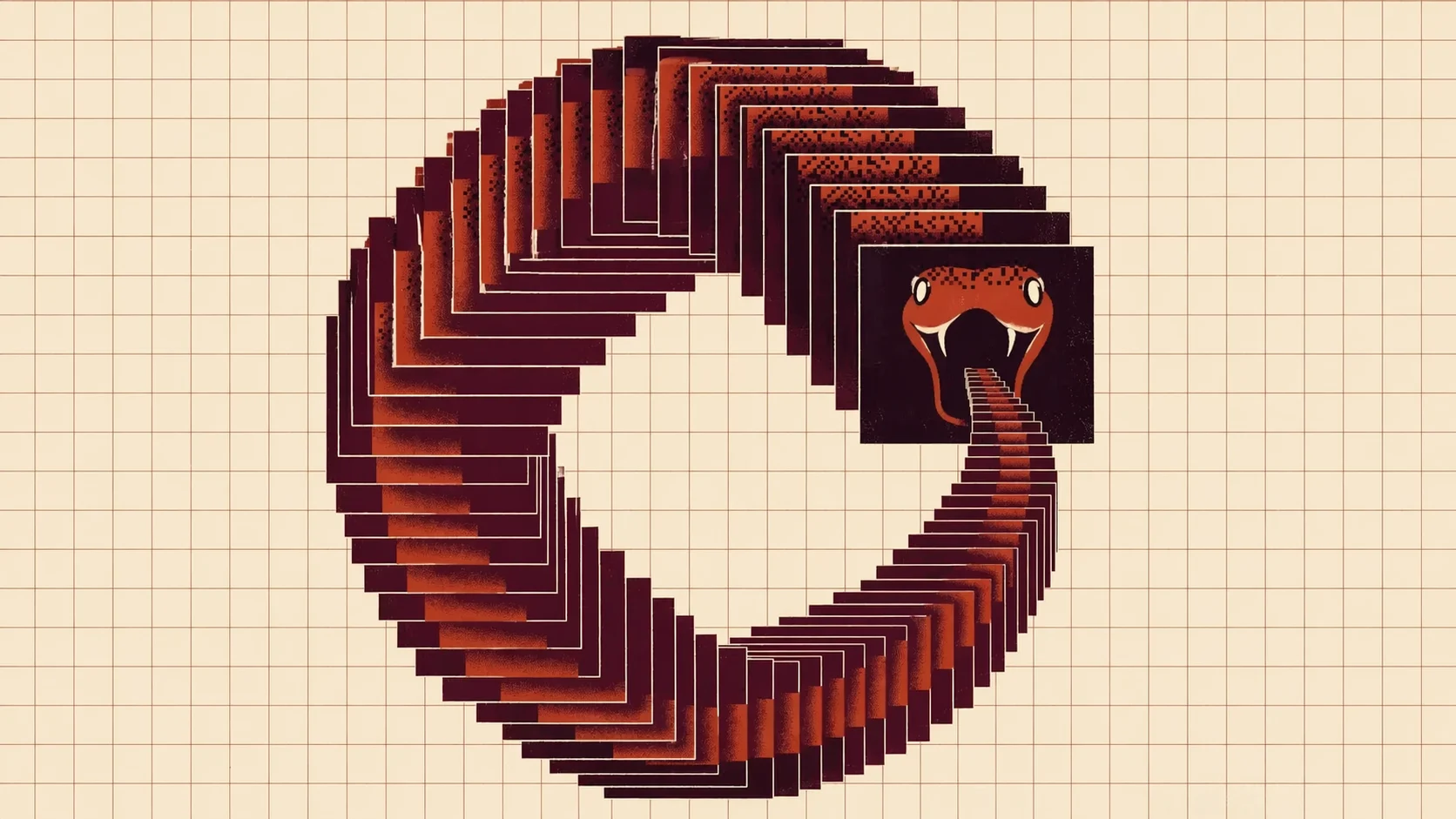

A Large Language Model (LLM) learns only from data that already exists. Whether that data was created by humans or machines, the model learns from what already is - so what it produces in the future risks being a copy of a copy of a copy of what has already been. One widely circulated estimate holds that about 57% of all text on the internet today is AI-generated. A Europol report has cited expert estimates that as much as 90% of online content, including social media, may be synthetically generated by 2026.

It’s like an ouroboros - the word itself comes from the Greek drakon ouroboros, meaning "snake devouring its tail". It is a commonly used symbol in pop culture today and, in my opinion, a perfect abstract visualisation of the AI age, in both a philosophical and an aesthetic sense.

Among those who share the view this piece is based on, the phenomenon is called 'model collapse': the danger that AI models trained on data generated by other AI models lose touch with the richness of human language and drift toward homogenised, formulaic, and eventually nonsensical outputs. Simply put, this is the story of the birth of AI slop, as the cool kids call it.

I interpret the ouroboros in two ways: first, as the snake devouring its own tail (as in the Greek), signifying destruction; and second, as the snake shedding its skin, signifying rebirth. It means creation and destruction simultaneously, and can denote a process that, when done right, leads to rebirth and improvement but, when done wrong, leads to destruction. In a more symbolic sense, this self-consumption of AI may not be simply destructive; it could be a necessary process of "life, death, and renewal," potentially leading to new forms of artificial intelligence that are less reliant on existing data models.

Santu Misra observes that the countermovement is already underway as a direct response to this convergence. "Every big photographer is shooting film again," he notes. "It was all digital and now people have started going back to analogue. The more AI will rise, the more the leaders of the pack - a Chanel, an Hermes - will double down on handcraft, artisanal work, artists." He is describing something important: that the luxury signal in a world of abundant AI-generated content shifts decisively toward the traceable and the imperfect. Friction becomes meaningful. An uneven texture, an idiosyncratic choice, a visible hand - these are no longer flaws to be corrected. They are evidence of original authorship.

This is not merely an aesthetic observation. It is an economic one. In Mani's formulation, when generation is abundant, interpretation becomes scarce, and scarcity is where value lives. The next cultural economy will not reward those who can produce the most content. It will reward those who can interpret, contextualise, and imbue that content with the kind of specific meaning that only comes from a specific life, a specific sensibility, a specific set of commitments.

The Indian Context: A Different Set of Stakes

The global conversation about AI and creativity tends to be dominated by Western, specifically American, anxieties and frameworks, and it is worth pausing to think about what the AI moment means specifically in an Indian creative context, which is both more complicated and more consequential than it is usually given credit for.

India's creative industries sit at a fascinating intersection. On one hand, the country has one of the youngest and most digitally native creative workforces in the world, with a generation of designers, stylists, photographers, and editors who came of age alongside social media and who have an intuitive fluency with digital tools that older practitioners often lack. On the other, the infrastructure of the Indian creative economy - the access to training, the formal education pathways, the institutional support systems - has historically been far more uneven than in Western markets, which means that AI's capacity to democratise access to professional-grade tools carries a different weight here, particularly given India’s rich and extensive heritage.

Pratishtha Dobhal points to a landmark legal development that is particularly significant in the Indian context: the Delhi High Court's 2023 ruling granting actor Anil Kapoor detailed protection against the unauthorised use of his name, voice, image, and persona by AI systems. "These are small endorsements of authorship," she says, "which need to be addressed at scale, democratically and judiciously." The ruling matters not just as a precedent for celebrity protection but as a signal that Indian courts are beginning to grapple with the question of what ownership means in an era of generative AI - a question that has enormous implications for every creative practitioner whose work might be used, without consent or compensation, to train the next generation of models.

Misra, who consults widely across Indian publications and brands, speaks to the economic reality facing young Indian creatives with particular frankness. He describes a job market in which brands are increasingly asking for one person who can manage an AI subscription and do the work that previously required a team, and he is honest about where that leaves emerging talent: "Unless they have something to say, they are just not making a dent. The middle designers are actually making a dent. Where younger designers are going to land, I honestly don't know." That is a sobering assessment from someone with fifteen-plus years of industry experience, and it points to the urgency of having an honest conversation in Indian creative education about what skills actually matter now and what the responsible deployment of AI actually looks like in practice.

At the same time, the Indian fashion and media landscape has always operated at a different pace and with a different logic than the global mainstream, and there is something in that distinctiveness that may prove to be a structural advantage. The premium placed on craft traditions, on regional aesthetics, on the kind of deeply contextual cultural knowledge that cannot simply be scraped from the internet and fed into a training dataset, means that the most interesting and most durable Indian creative work already has a quality of specificity that AI cannot replicate. The question is whether the industry is thoughtful enough to protect and develop that specificity rather than abandoning it in pursuit of efficiency.

The Authorship Question Nobody Wants to Answer Properly

Ownership has become one of the largest unresolved ethical crises at the intersection of AI and the creative industries, and the conversation around it has been frustratingly partial. The legal frameworks are lagging. The industry norms are largely nonexistent. And the people with the most power to shape both - the major platforms and the large AI developers - have significant financial incentives to keep the question blurry for as long as possible.

Santu Misra confronts the question of who becomes the creator in a world where an algorithm can produce an image or design based on vast datasets of existing creative work with characteristic directness: "The rights will always belong to the creator. AI will copy it. But it cannot steal it. There are laws against recreation, against copy." This is technically true as far as it goes, but the gap between what the law says and what actually happens in practice is significant and growing. There are artists who have entirely stopped posting their work on social media because they do not want it used as training data, and their instinct to protect what they have made is entirely legitimate even if the legal mechanisms to enforce that protection are still being constructed.

Archana Jain offers the clearest practical framework for where the ethical lines in her industry need to sit. "If AI is used in ideation only, it can be an optional notation. But if images and final copy are AI-generated, it must be disclosed in credits. Synthetic personas or photorealistic imagery on social media must be flagged." This seems like a reasonable minimum, and yet it is one that the industry is currently ignoring wholesale. The question of transparency about AI use in creative work is not an abstract ethical debate. It is a question of trust, and trust, once broken at scale, is extraordinarily difficult to rebuild.

Dobhal describes a broader cultural problem that underlies the authorship crisis: the rise of what she calls content fatigue, with seasoned creators and emerging ones alike recycling viral and trending content until everything begins to blur into indistinction. "Plagiarism and overlooking bibliographic details has become widespread," she observes, "owing to the double-edged sword of agility that AI prompts upon use and distribution of content." Her prognosis is that the response will be a renewed premium on quality and ingenuity, along with the emergence of new platforms and communities that set a more ethical creative tone from the outset - which is both hopeful and, given the current state of the attention economy, optimistic in a way that requires active effort to make true.

The Intellectual Complacency Problem

Here is the argument that does not get made enough, perhaps because it is uncomfortable: the most serious long-term threat of AI in creative industries is not job displacement, however real that is, and it is not aesthetic homogenisation, however visible that is. It is intellectual complacency. The slow, comfortable erosion of the habits of mind - the sustained attention, the rigorous taste, the willingness to sit with difficulty and ambiguity - that make truly original work possible in the first place.

When you can generate a passable first draft in thirty seconds, the temptation to treat that draft as sufficient is real and immediate. When you can produce a mood board that looks exactly like what your reference images look like, the pull toward the already-existing rather than the genuinely new is powerful. When research that once took hours of sustained reading can be compressed into a summary, the deep contextual knowledge that comes from actually living with a subject - following the threads, making unexpected connections, arriving at insights that no one else has arrived at because no one else has followed exactly that path - is the thing that gets abandoned.

Misra raises something even more structural in his observation about what is happening to mass intellectualism: "We don't have mass intellectuals anymore who are shaping millions of people's opinions the way we once did. We have writers on Substack influencing twenty thousand or a hundred thousand people." He finds this hopeful - original thought will always find expression - but the fragmentation he is describing has real consequences for the quality of public discourse and for the accumulation of shared knowledge that has historically been one of the things that distinguishes a vital creative ecosystem from a merely productive one.

The deeper risk, and it is one that Indian creative education in particular needs to take seriously, is that if AI becomes the primary means by which new creatives develop their taste and their skills, what they are actually developing is the ability to navigate and refine AI output, which is a fundamentally different cognitive process from developing a genuine creative practice. Assomull makes this point with a precision that deserves to be considered a directive.

She asserts, "To use AI effectively requires skill. Prompting, discerning what to trust, shaping output into something meaningful are not instinctive abilities. They come from experience. In that sense, AI does not replace expertise - it actually demands it."

Real World: The Case Studies

The theory becomes more legible when you look at what has actually happened in practice. In 2023, the backlash against AI-generated fashion campaigns was swift and vocal, with audiences calling out Valentino, Balenciaga, and Gucci for deploying the technology in ways that felt like a deliberate erasure of the human labour that actually upholds the foundation of the fashion industry. The brands did not recall the campaigns entirely, but they recalibrated. Santu Misra describes the shift this way: "They are almost like slowly injecting AI - they are microdosing people with AI, you know? And eventually people will stop fighting." Whether he is right remains to be seen, but the microdosing strategy is real and it is worth watching.

On the other end of the spectrum, the Writers Guild of America strike in 2023 - which had AI regulation among its core demands - produced one of the first formal industry agreements governing the use of AI in creative production. The agreement establishes that AI cannot write or rewrite literary material, that AI-generated material cannot be used to undermine a writer's credit, and that studios cannot require writers to use AI software; the guild also reserved the right to assert that using writers' work to train AI systems is prohibited. It was an imperfect agreement and enforcement remains difficult, but it demonstrated that collective action can produce meaningful constraints on how AI is deployed in creative industries, and it established a template that other industries and other jurisdictions will inevitably follow.

In India, the intersection of Bollywood, digital media, and AI has produced its own specific tensions. While the Delhi High Court ruling that Pratishtha Dobhal refers to was a landmark win for actor Anil Kapoor’s persona and creative rights, the broader question of how India's creative industries protect their practitioners - and particularly their emerging ones - in an AI-accelerated market has not yet received the serious policy consideration it requires. And the industry has an obligation to engage with that policy process before the technology companies finish writing it for them.

The case of Hermes is instructive from a different angle. While other luxury houses were experimenting with AI-generated content and absorbing the associated reputational risk, Hermes continued to commission artists in the way it always has - producing in-house magazines, social media content and campaigns that are artisanally built around a philosophy that values handcraft and human vision. This is not a sign of Hermes staying ‘old-school’ or sticking to its legacy roots. It is a case study in a luxury house understanding that its brand equity is built on a very specific relationship to the human hand and the human eye, and that diluting that relationship even slightly would cost far more in brand value than whatever efficiencies AI might offer. It is a strategic judgement rather than a moral one, and it sets an example for other luxury brands which have the resources and capital to sustain the industry and its creatives.

Ethical AI in Creative Fields: What a Sustainable Future Actually Looks Like

The conversation about ethical AI use in creative industries is still, embarrassingly, at a fairly early stage, partly because the tools are developing faster than the laws and regulations that might govern them, and partly because the people with the most power to shape those laws have a vested interest in keeping them permissive. But the outlines of an ethical and sustainable relationship between AI and creative practice are becoming visible, and they must be articulated clearly.

Archana Jain's framework is a useful starting point. She notes: "AI should be a strategic ally, not used on autopilot. When we stop questioning outputs and lose the ability to think independently, or rely on AI for decisions that require contextual sensitivity, intuition, or ethical consideration, the balance tips into dependency. AI cannot replace human judgement. It must be leveraged to augment it." This principle matters most in environments that reward speed and volume over depth and quality.

Now, what does that augmentation look like in practice? It looks like using AI to handle the research scaffold so that the creative mind can spend its energy on the architecture rather than the foundations. It looks like using AI to iterate rapidly through visual possibilities so that the creative director can make faster and more informed decisions about what is worth developing. It looks like using AI for the copy editing pass that catches the errors and inconsistencies that a tired human eye misses, so that the human editor can concentrate on the much harder and more important work of making sure the work remains humanly authentic. Dobhal points to AI video editors, AI-assisted content writers, generative AI artists, and AI content creators as roles that will not just survive but grow - not because AI is doing the work, but because humans who understand AI are doing better, faster, more informed work with it.

Disclosure is non-negotiable in any genuinely ethical framework for AI use in creative fields, and the industry needs to stop treating it as optional. If an image is AI-generated, it should be clearly labelled as such. If a campaign brief was developed with AI assistance, it should be noted. If a piece of writing was drafted by a language model and edited by a human, the process should be disclosed. Contrary to what some may think, this is not a confession of intellectual failure. It is an acknowledgment of how contemporary creative production actually works, and the audience - particularly younger audiences who, as Mani notes in his essay, are not asking what is real but searching for what is true - will respect the honesty far more than they will respect the fiction of a purely human origin story.

The question of training data and consent is the ethical issue that the industry has been most reluctant to confront directly, but it is the one that will ultimately determine whether AI becomes a tool that creatives own and direct or one that extracts value from their work without compensation. Dobhal is right that the authorship questions need to be addressed at scale and with democratic legitimacy, not by individual court rulings won by those with the resources to pursue them. An Indian creative practitioner whose textiles, visual language, and regional aesthetic vocabulary have been absorbed into a training dataset without their knowledge or consent has had something plagiarised from them, and the fact that the law has not yet caught up with that reality does not mean the plagiarism did not happen.

Sujata Assomull identifies the critical ethical principle that should govern how AI is used for the mundane and logistical work it actually handles well: "Where AI can be valuable is in supporting the process - fact-checking, copy editing, research assistance. But to use it effectively requires skill. Prompting, discerning what to trust, and shaping output into something meaningful are not instinctive abilities; they come from experience." The implication of this is that creative education needs to change - not by teaching people to use specific tools, which will be obsolete before the course ends, but by teaching the underlying judgment and critical faculties that make any tool, AI or otherwise, useful rather than a threat.

A sustainable future with AI in creative industries looks like this: AI handles the volume, the iteration, the administrative labour, and the first-pass research. Humans handle the vision, the context, the ethics, the conceptualisation, and the judgment calls that require lived experience. The industry develops clear and enforceable norms around disclosure, consent, and remuneration. Creative education shifts its emphasis toward the skills that AI cannot replicate - critical thinking, cultural sensitivity, aesthetic judgment, the ability to draw on personal experience and translate it into work that resonates with others. And creatives, individually and collectively, develop and protect the idiosyncratic sensibilities that no training dataset can simulate because they come from a life, and not from a saturation of already consumed content.

The Only Intelligent Position

Fashion has always operated as an interpretive practice, mediating between self, history, and pop culture. So has journalism. So has design, and photography, and all of the creative disciplines that are currently wrestling with what AI means for the value and future of their work. The interpretive function - the act of taking what exists in the world and making sense of it, finding the angle, locating the meaning, deciding what matters and why - cannot be delegated to a system that lives on a server and not in the real world.

Dobhal speaks with the authority of someone who has spent a career navigating the very process, or rather honing the very skills, that make a practitioner truly gifted at their craft. "Innovation, discovery, and curiosity should be the three pillars used by creatives to not become generic talking points. What will set apart the original creators in fashion and lifestyle journalism are those who are audacious enough not to go the tried and tested route." That audacity - the willingness to make a genuinely risky bet, to stand behind a point of view that the algorithm would never have generated because it has no skin in the game - is the thing that AI cannot replicate and the thing that the industry needs to protect and develop in its practitioners.

Mani's framework ends with a formulation that is as close to a manifesto as this conversation has produced: machines produce, humans give meaning. AI can accelerate process. It cannot assign meaning. The responsibility of contemporary creators is not to resist technology, nor to submit to it. It is to engage critically, maintaining authorship, accountability, and intellectual independence. In environments where generation is abundant, interpretation remains scarce. That scarcity defines the next cultural economy.

"The original thought will never not exist, and intellectualism will never not exist," says Santu Misra, who has watched enough creative cycles to know the difference between disruption and extinction. The generative ocean can grow as vast and as polished as it likes, and the work that comes from a real interior life, from someone who has actually been somewhere and thought something through and had the courage to commit to it, will only become more valuable as everything around it becomes more frictionless and therefore more forgettable. Perfection, produced at scale, is just very expensive mediocrity.

The question that this story sets out to answer is deceptively simple: how do creatives adapt without surrendering originality? The answer is to use AI the way a skilled craftsperson uses their power tools - to handle the work that does not require your own original thought, so that your creative prowess has more time, energy, and bandwidth to do the work that only it can do. You use the machine on the research, the scheduling, the iteration, the copy editing, the operational scaffolding. And then you show up, fully present and fully yourself, for the work that actually matters. The enemy is not the technology. Don’t trade your vision for convenience - that vision is the only thing that will set you apart from the machine.